Navigating the AI wave

A think tanker's guide

By Joscha Wirtz, Sonia Jalfin and María Belén Felix

Winters, waits and waves

The story of Artificial Intelligence (AI) is a story of winters, waits and waves. Since the 1950s, when the first definitions of AI emerged, we have seen two AI winters - periods when the technology failed to live up to expectations. Understandably, many remain skeptical and choose to wait before committing, which introduces the wait problem: When have I waited too long? The current debate suggests that AI will not fundamentally change the nature of work in the short term, but will accelerate a process of fragmentation in terms of skills, levels of access and control. It brings with it new ethical challenges, which can quickly lead to compounding risk that is difficult to manage. This article focuses on why and how think tanks should responsibly integrate AI into their organization now - and proactively mitigate risks.

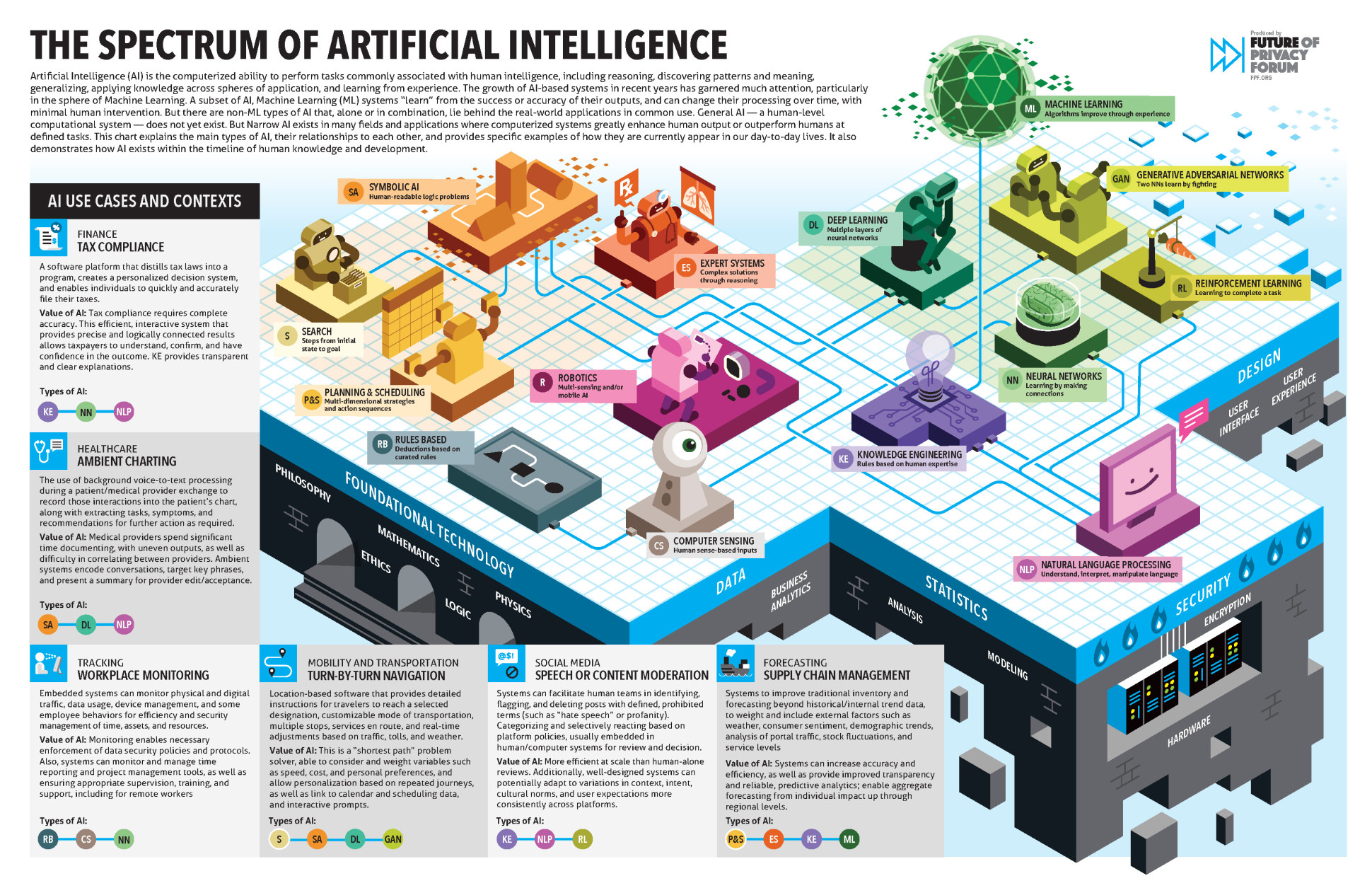

AI is the simulation of human intelligence in machines programmed to think and learn like humans. It involves the creation of computer systems and algorithms that can perform tasks that normally require human intelligence, such as visual perception, speech recognition, decision making, and language translation. The current wave is the wave of Generative AI, a subset of AI.

It is apparent that there is a wide range of AI concepts, technologies, and associated applications. To get a feel for the Spectrum of AI, we recommend this amazing infographic created by the Future of Privacy Forum.

Imagine a future in which think tanks are empowered to make informed strategic decisions about when and when not to use AI-enabled technologies - moving from FOMO to intentionality.

However, why should they care?

Understanding AI in the context of think tanks

Regardless of the political and societal landscape in which they operate, think tanks face an increasing number of exponential gaps. This term refers to the disparity between the rapid pace of change in key areas of transition and the slow pace at which society has been able to adapt to and regulate these changes. This is particularly evident in our information ecosystems: As they come under increasing stress and shift from functionality to ideology, the ability to monitor, understand, and contribute to their health is critical. Given their importance for evidence-based policymaking and their influence on public opinion, think tanks need to understand, challenge, and ultimately embrace emerging technologies like AI that can fill or widen these gaps for two reasons:

- Extended Responsibility of Think Tanks: Think tanks need to understand the risks and opportunities of AI, as it will first and foremost affect the core logic of the political and societal systems they operate in.

- Characteristics of the Think Tank Business Model: Think tanks need to understand the risks and opportunities of AI, as their processes and products are vulnerable to easy yet irresponsible automation (aggregate - write - publish).

Let's look at two recent examples. The first example is a political campaign that uses AI to target specific audiences and persuade them, adding another layer of complexity to elections. The second example is an AI-powered strategic intelligence platform with access to parliamentary research services, changing the way knowledge is aggregated for decision-makers. What do these examples mean for your own organization and how would you “skill up” in response to this new reality?

Finding a comprehensive summary of the most significant advancements in AI research, industry, safety, and politics can be challenging. To get you started, we would like to suggest these resources: The EU Approach to Artificial Intelligence, The EU’s AI Watch Platform, Stanford’s AI Index, the OECD AI Policy Observatory, TIME’s 100 Influential People in AI List, and the European AI & Society Fund’s List of Experts.

Navigating the AI integration process

We call for a holistic approach to incorporating AI into think tanks. Presently, AI exploration in most think tanks is driven by individuals. Critical knowledge can become isolated, leading to increased fragmentation of the workforce. Without organizational guardrails and the establishment of best practices, key AI-related risks cannot be mitigated: Loss or misuse of intellectual property, reproduction of bias, increase in indirect environmental footprint - to name a few. We propose the following three phases for integrating AI into think tanks:

Phase 1 - Setting the stage intentionally

The journey to AI integration begins with an assessment of organizational and individual readiness. This could include reviewing the current digital infrastructure and its capabilities with respect to AI, or mapping existing digital skills and skill gaps across the organization. To structure this process, we recommend selecting a digital literacy framework (see box below) and creating a simplified think tank map that lays out organizational functions, processes, products, and intersections with external stakeholders.

Next, setting an ambition level guides the depth of AI adoption. A clear and transparent decision at the executive level is a minimum requirement, as transferring parts of the workload to AI assistants raises fundamental questions about the future of work. This can lead to significant uncertainty among the workforce, which should be proactively mitigated.

The level of ambition is linked to the organization's values, in particular its interpretation of organizational responsibility. It can be translated into various supporting resources, such as a code of conduct, prompt libraries, and review cycles for AI-generated content, essentially integrating AI sensitivity into existing compliance and quality assurance mechanisms.

Understanding readiness (where we are) and ambition (where we want to be) enables the design of an AI learning journey for the organization and the individuals within it.

Phase 2 - The beginning of an AI learning journey

Depending on their subject area, size and geographical location, think tanks face challenges such as fundraising, disruptive changes in the political and societal context, lack of visibility and organizational performance. These challenges provide perfect use cases for future AI assistants. Embedded in the readiness assessment findings and responsible use guardrails, these use cases are more than patches: They become part of an organization's digital transformation.

Common examples include AI-assisted research, programming, and communications, which can be explored on major platforms such as OpenAI's ChatGPT, Microsoft's Copilot or Bing integration, Google's Gemini, Anthropic's Claude, Cohere’s Coral and Aya, and cross-platform services like Poe. The number of services built on these major models is huge; we suggest using one of the AI service search engines to scan the landscape (see box below).

It is important to consider the following when exploring AI solutions: Most of us are "stuck in the front end", meaning we do not have the ability to understand or change the core of the systems. For this reason, you may want to think of AI tools as interns that need precise guidance and can still get things wrong, or you may want to focus on open models (see box below). Finally, whatever your initial impression of the usability of AI systems, keep in mind that they are rapidly evolving.

This journey can equip think tanks with the knowledge, tools, and strategies they need to effectively integrate AI into their research and policymaking processes. As a result, they can realize productivity gains and lower the barriers to using digital tools in research through Generative AI's focus on natural language. By understanding the technologies and their limitations, think tanks can proactively manage the change process and create an appropriate work environment for their staff and stakeholders.

Phase 3 - Cross-organizational activities

Building on these improvements in their current operations, think tanks can pursue new opportunities in the form of cross-organizational activities: They could build a content verification system for science-based policy insights, establish direct links to knowledge and information management systems used in institutions around the world, and contribute training data to specialized AI models.

The current wave of AI is in full swing, and the implications are huge. Let's not wait, and let's build the skills we need to keep our systems healthy.

To get started on your own learning journey, check out these resources: DQ Institute's Digital Intelligence Mapping, The Global Landscape of AI Ethics Guidelines (Nature Machine Intelligence), The AI Campus, Open LLM Leaderboard, and There’s An AI For That.

The authors offer a co-learning program for AI in think tanks. If you would like to learn more about the program, please contact [email protected].

Joscha Wirtz

Digital Strategy Lead and Scientific Consultant at the Wuppertal Institute for Climate, Environment and Energy

Joscha is Digital Strategy Lead and Scientific Consultant at the Wuppertal Institute for Climate, Environment and Energy, a leading German think tank for sustainable transition research and consultancy, where he works with organizations across Europe to enable the twin transition. Previously, he led the Responsible Research and Innovation Division at RWTH Aachen University and co-founded an NGO hub to support grassroots initiatives that contribute to sustainable change. Joscha holds an M.Sc. in Environmental Engineering from RWTH Aachen University.

Sonia Jalfin

Founder and director at Sociopúblico

Sonia has more than 20 years of experience working to position and communicate public policy research. She founded and now directs Sociopúblico, a strategy and communications studio for complex ideas. She has partnered with the World Bank, UN agencies and many of the most relevant think tanks and NGOs in the world, from Brookings Institution to Save the Children, from ODI to the Center for Global Development, among many others. She holds a degree in Sociology from the University of Buenos Aires and an M.Sc. in Media and Communications from the London School of Economics, where she received the Merit Award. She is a regular commentator on innovation at La Nación newspaper and hosts the podcast Meet the Influectuals.

María Belén Felix

Project manager at Sociopúblico

María is a project manager at Sociopúblico. Her work focuses on digital communication and campaigning for organizations that address some of the most pressing issues around education, health and climate change. She is also Communications Director at FARN, an Argentina-based NGO that advocates for environmental justice. María studied Education and Journalism in Buenos Aires, Argentina.